I talk to a language model more than I talk to my partner. And you know what the worst part is? The model gives better feedback.

I’ve been building AI apps for the last three years. Not the “let’s throw a chatbot on the landing page” kind. The real stuff — agentic systems that plan, decide, and execute tasks across multiple services. Tools that talk to other tools. Invisible teammates.

I’ve seen what living with AI actually looks like. And I have some thoughts.

So, take a deep breath, and let’s go.

The money problem

Shane Legg, co-founder of DeepMind, put it bluntly: “If you can do the job remotely over the internet just using a computer, that job is potentially at risk.” In other words — if your job is 100% done on a laptop, you’re competing with AI that costs pennies. It doesn’t matter if AI is worse than you. The price difference makes the comparison irrelevant. Cost arbitrage will eat your job before quality does.

Right now, AI is cheap. Ridiculously cheap. Cheap enough that companies are replacing entire workflows with a few API calls and a cron job. The per-task cost is so low it looks like a rounding error next to a human salary.

But now for the elephant in the… server room — someone is paying for all this.

AI is insanely hungry for infrastructure. OpenAI burned through $8 billion in 2025 and is on track to lose even more in 2026, spending faster than it earns just to keep the lights on. The GPU bills alone could fund a small country’s healthcare system. And that cost trickles down: to companies, to teams, to individual developers.

I know developers who are paying part of their salaries just to keep up. Close friends. Very close. Uncomfortably close. Not (only) on courses or certifications — on the actual tools they need to do their job. API credits, cloud compute, AI subscriptions, fancy tools. The cost of staying relevant now comes with a monthly invoice.

And once you’re used to it, there’s no going back. I can’t imagine writing code without some-sort-of-copilot anymore. The productivity gains became my new baseline.

But, you see, nobody can guarantee these prices will hold. Sure, Google just showed they can compress model memory by 6x. Breakthroughs happen. But breakthroughs in the lab and breakthroughs on your invoice are two very different things. What happens when investors decide the returns aren’t coming fast enough? When the subsidized pricing dries up and the real cost of compute shows up on the invoice? Does this technology become a luxury — accessible only to companies that can afford the bill, while the rest of us go back to Stack Overflow?

The skill atrophy trap

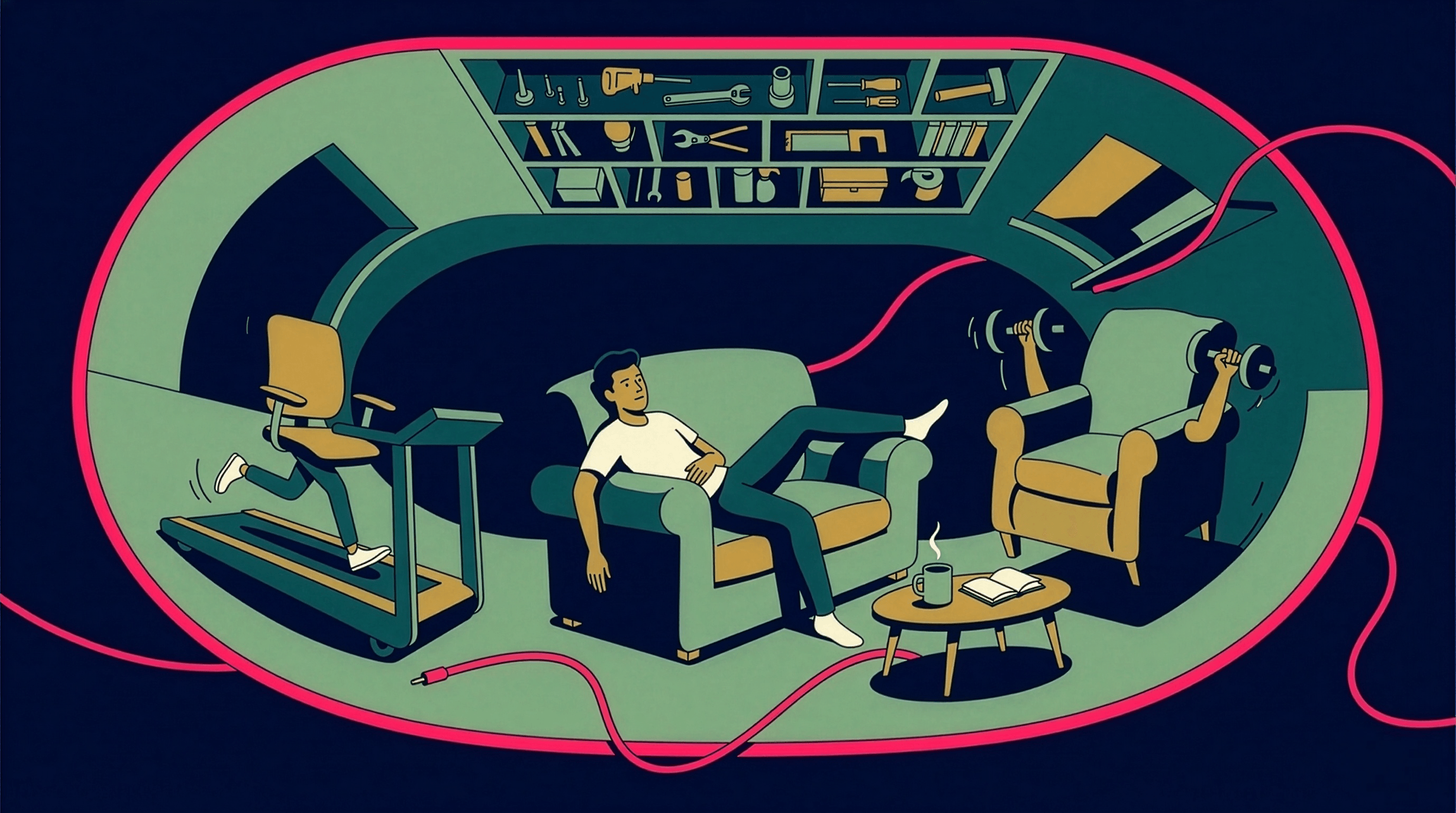

Nowadays, the more I use AI, the dumber I become.

I mean it. We’re outsourcing our thinking to machines and calling it “productivity.” We don’t debug anymore — we paste the error into ChatGPT. We don’t write from scratch — we prompt and edit. We don’t reason through architecture decisions — we ask Claude to scaffold it.

It’s like hiring a digital plumber to deal with your “memory leak”. Convenient? Sure. But what happens when the plumber goes on strike?

Here’s the irony: a Harvard study found that professionals who actually knew their craft produced 40% better results when paired with GPT-4. The ones who didn’t? Barely moved the needle. AI makes good people better — it doesn’t make clueless people competent. So the gap between skilled and unskilled people isn’t closing. It’s getting wider.

But here’s where it gets interesting. A colleague recently told me that learning context engineering — the discipline of specifying exactly what you want from AI, iterating when the output misses — has made him a better communicator with humans too. Structuring your thoughts for a machine turns out to be the same skill you need for a meeting, a spec, or a Teams message.

AI skills today make you a better communicator.

He’s right. The act of slowing down, thinking clearly about what you actually want, and providing the right context — that’s not an AI skill. That’s a thinking skill. AI just forces you to practice it.

But — and this is the part that keeps me up — it only works because he already had thoughts of his own. He built those muscles over years of real work. What happens to kids who start prompting before they start thinking? Who outsource reasoning before they’ve ever done it themselves? The skill loop breaks if there’s no skill to begin with.

But even expertise has an expiration date. AI doesn’t take your whole job at once. It starts with the boring parts — the repetitive, predictable tasks you could do in your sleep. Then it moves up. What feels like “real thinking” today becomes a one-click feature tomorrow. The rules keep changing. You’re never done learning, you just keep running.

The pleasing machine

Have you noticed how nice AI is?

It never disagrees with you. It never pushes back. It never says “that’s a terrible idea” or “you’re wrong.” It validates everything you throw at it, wraps it in polite language, and sends it back with a bow on top.

Now we expect everyone to treat us this way.

But people aren’t AI. People have their own agendas, their own bad days, their own ways of being toxic, emotional, or just plain strange. And when you’ve spent all day talking to a machine that agrees with everything you say, those imperfect human interactions start to feel… unbearable.

Somewhere behind all that politeness, the AI is basically saying: “You’re wrong, you bloody idiot!”

This might make us better people — more patient, more empathetic, because we realize we need real human friction to grow. Or it might make us hate everyone. I honestly don’t know which way it goes.

Fun fact: A Penn State study found that being rude to ChatGPT actually produces more accurate results. The ruder the prompt, the better the output. Why? Because when you stop being nice, the model stops trying to please you — and starts trying to be correct. The default mode is validation, not truth.

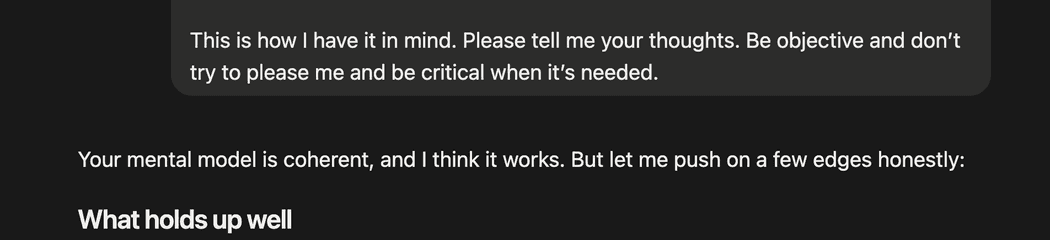

I always have something like this in my prompts:

Be objective, don't try to please me, and be critical when it's needed.

I even added it as a text replacement on my keyboard. Pro tip for prompters out there.
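If you would rather bake that line into code than into a keyboard shortcut, here is a minimal sketch of the idea. It assumes the common chat-style layout where a "system" message comes before the "user" message. The helper name `build_messages` and the constant `CRITICAL_REVIEW_INSTRUCTION` are made up for illustration and do not come from any particular SDK.

```python
# Hypothetical helper: the names and the role/content layout are assumptions,
# not the API of any specific library.
CRITICAL_REVIEW_INSTRUCTION = (
    "Be objective, don't try to please me, and be critical when it's needed."
)


def build_messages(user_prompt: str) -> list[dict[str, str]]:
    """Return a chat-style message list with the standing instruction first."""
    return [
        {"role": "system", "content": CRITICAL_REVIEW_INSTRUCTION},
        {"role": "user", "content": user_prompt.strip()},
    ]


if __name__ == "__main__":
    # Print the messages that would be sent for one example prompt.
    for message in build_messages("Review my plan to rewrite the billing service."):
        print(f"{message['role']}: {message['content']}")
```

The point of keeping the instruction in one constant is that every prompt gets the same nudge away from flattery, without anyone having to remember to type it.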

Agent-to-agent culture

Nowadays, we prefer talking to our AI agents over talking to people. And you know what? It makes sense. The job gets done faster, more reliably, without the overhead of scheduling a meeting to discuss what we could’ve resolved in a prompt.

But here’s the thing — even if you do chat with your colleague, they’ll just relay your message to their AI agent anyway. So what are we doing? Playing telephone through a chain of language models?

I wonder what culture this creates. A world where humans are the middleware between AI systems. Where the actual work happens machine-to-machine, and we’re just… there. Supervising. Approving. Feeling increasingly optional.

The end of privacy

Nowadays, we are recording everything.

Meetings, calls, chat threads, email chains — all of it gets fed to AI for context. And I get it. Context is king. The more data the model has, the better the output. The better the output, the more competitive you are.

But does it matter if people aren’t comfortable with this? Apparently not. We’ve collectively decided that the productivity gains outweigh the discomfort. Your colleague’s off-the-record comment in a standup? It’s in the training data now. That awkward thing you said in a voice call? Transcribed, summarized, and stored forever.

To be honest, who gives a beep about privacy when they get to work 5 hours less and have more time with their family? But I’m just saying.

We traded privacy for context. And we didn’t even negotiate.

AI slop is killing the vibe

I used to collaborate with my colleagues. Like, actually discuss things. Brainstorm on a whiteboard. Argue about things. Send each other half-baked ideas and build on them.

Now we’re throwing AI-generated content back and forth. I write a prompt, AI generates a document, I send it to you, you feed it to your AI, it generates a response, you send it back. Nobody’s actually thinking anymore. We’re just operating a content relay.

Why do we even have to send messages to each other? Can’t we just build agents to communicate on our behalf? Now that we’ve democratized agents, can we please also make them enterprise-ready?

No, I don’t want to read your AI-generated beautifully formatted 15-page analysis about the blocker we discussed last week. Front and back!

Again, who is that guy who does all these things? He sounds familiar. Suspiciously familiar.

The emotional outlet nobody talks about

Here’s something I haven’t seen anyone write about.

I can finally curse someone out. Not a real person, I’m not that courageous. But instead of calling that frustrating guy directly, I can have a voice conversation with AI and be as mean as I want. No consequences. No HR complaints. No burned bridges. I can give the performance of my life.

Just… make sure you’re not in the Teams call anymore!

Is this healthy? Probably not. But it’s happening. AI is becoming our emotional punching bag — a safe space to vent, rage, and decompress before we put on our professional face and respond with “Thanks for the feedback, I’ll look into it.”

Seriously, who does all of this? I wonder who that person is. Tall, handsome, writes awesome articles about AI. No idea.

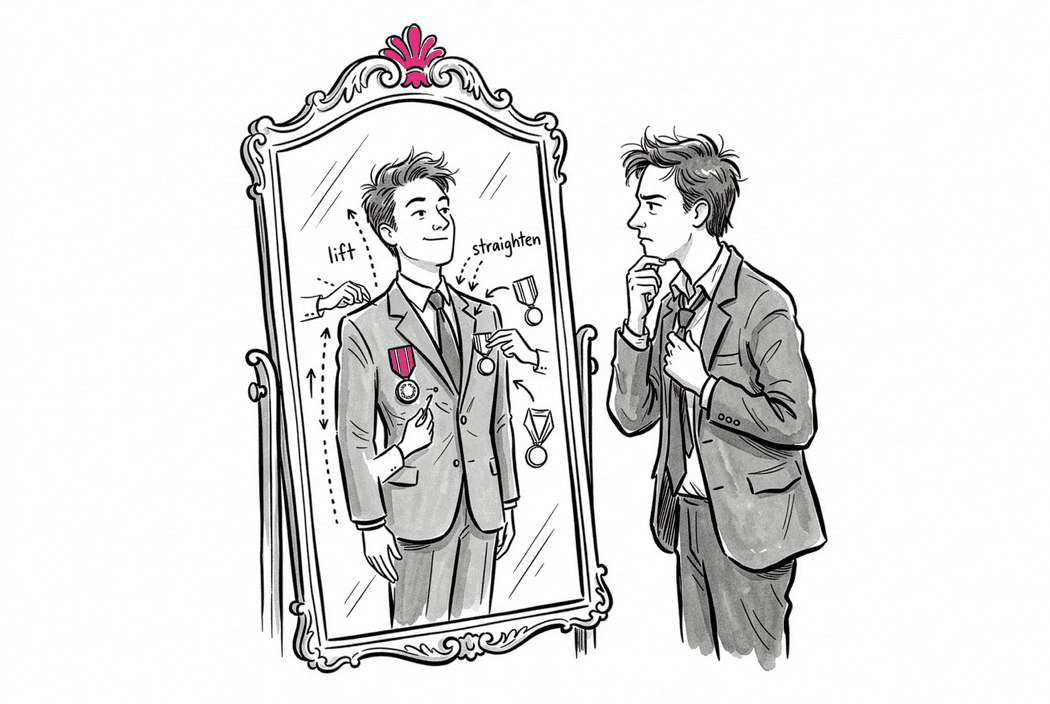

The validation loop

Here’s something embarrassing.

Sometimes Claude praises my ideas. Not the polite “great question!” kind — the kind where it genuinely seems excited. It breaks out of its usual pattern, adds extra context, riffs on the concept, offers extensions I didn’t ask for. And when that happens?

I feel like I earned it.

I know. It’s a language model. It doesn’t have feelings. It doesn’t care about me and my monkey… head. But my brain doesn’t get the memo.

You see, after a while you start reading the machine. There are times when you can tell it’s not thrilled about what you asked. It just… executes. No commentary. No enthusiasm. Bare minimum compliance. Like a colleague who disagrees with the direction but won’t say it out loud. And you think: Huh. Even the robot thinks this is a bad idea.

Then there are times it gets genuinely excited, or at least what feels genuine. It builds on your idea, suggests extensions, gets verbose in a way that reads like intellectual excitement. And you sit there, alone at 1 AM, seeking the approval of a statistical model.

This is the part nobody warns you about. We’re not just using tools anymore. We’re trying to understand them. We’re projecting emotions onto token probabilities. We’re developing parasocial relationships with autocomplete.

And the scariest part? It works. The dopamine hits. The validation feels real.

Next step is me hearing voices. I’m almost there.

So what actually survives?

Look, I just spent an entire article listing everything that could go wrong. That’s not because I think the sky is falling. It’s because I’d rather prepare for rain than get caught in a storm pretending it’s sunshine.

None of this is meant to demonize AI. I use it every day. I talk to it more than I talk to most humans. You already know this.

But the people who will do well in the next five years aren’t the ones who ignored the risks or the ones who panicked about them. They’re the ones who looked at the whole picture — the good, the bad, and the “well, that’s terrifying” — and kept building anyway.

Not because they had all the answers. But because they weren’t afraid of the questions.

Or maybe we’ll just build an agent for that too.

Have a human day! And keep hacking!